Differentiable Forward Rendering in DPIR

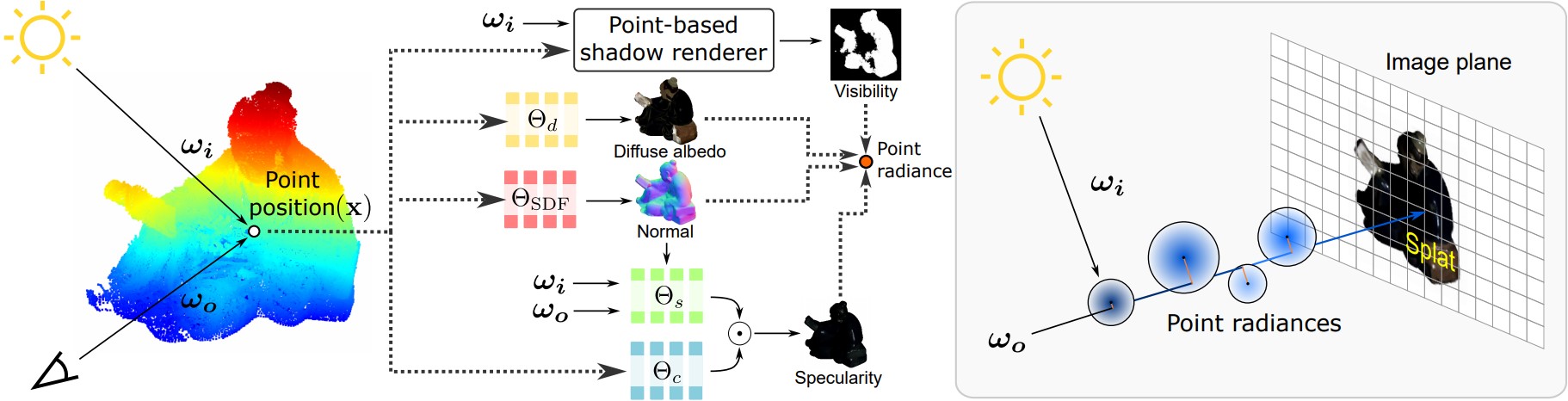

The position of each 3D point is used to query the diffuse-albedo MLP $\Theta_d$, the SDF MLP $\Theta_\text{SDF}$, and the specular-basis coefficient MLP $\Theta_c$. The specular-basis BRDF MLP $\Theta_s$ models the specular-basis reflectance given the incident and outgoing directions $\boldsymbol{\omega_{i}}$ and $\boldsymbol{\omega_{o}}$. For each image, the point-based shadow renderer estimates the visibility of every point from the light source. From the diffuse albedo, normals, specular reflectance, and visibility, we compute the radiance of each point. The radiance is then projected onto the camera plane and rendered into pixel colors through splatting-based differentiable forward rendering.
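The last two steps of the pipeline, per-point shading followed by splatting, can be illustrated with a minimal pure-Python sketch. It is not the DPIR implementation. It assumes that the radiance is the visibility times the sum of diffuse albedo and a coefficient-weighted sum of specular-basis values, scaled by a clamped cosine term and the light color, and that splatting is a normalized Gaussian blend around each projected point with no depth ordering. The function names `point_radiance` and `splat`, the pinhole camera with focal length `focal`, and the parameters `sigma` and `radius` are all hypothetical. The MLP outputs ($\Theta_d$, $\Theta_c$, $\Theta_s$) and the visibility are passed in as plain numbers.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def point_radiance(albedo, normal, spec_coeffs, spec_basis, visibility, w_i, light_rgb):
    """Shade one point (assumed model, not taken from the source):
    visibility * (diffuse + sum_k c_k * basis_k) * max(n . w_i, 0) * light.

    spec_basis holds one RGB triple per basis, already evaluated for the
    current incident/outgoing directions (the role of Theta_s)."""
    cos_term = max(dot(normal, w_i), 0.0)
    radiance = []
    for ch in range(3):
        specular = sum(c * basis[ch] for c, basis in zip(spec_coeffs, spec_basis))
        radiance.append(visibility * (albedo[ch] + specular) * cos_term * light_rgb[ch])
    return radiance


def splat(points_cam, radiances, focal, width, height, sigma=1.0, radius=2):
    """Project camera-space points with a pinhole model and blend their
    radiance into nearby pixels using normalized Gaussian weights.
    Simplification: no depth sorting or occlusion handling."""
    color = [[[0.0, 0.0, 0.0] for _ in range(width)] for _ in range(height)]
    weight = [[0.0] * width for _ in range(height)]
    for (x, y, z), rad in zip(points_cam, radiances):
        if z <= 0.0:
            continue  # point is behind the camera
        u = focal * x / z + width / 2.0
        v = focal * y / z + height / 2.0
        cu, cv = int(round(u)), int(round(v))
        for py in range(cv - radius, cv + radius + 1):
            for px in range(cu - radius, cu + radius + 1):
                if 0 <= px < width and 0 <= py < height:
                    d2 = (px - u) ** 2 + (py - v) ** 2
                    w = math.exp(-d2 / (2.0 * sigma ** 2))
                    weight[py][px] += w
                    for ch in range(3):
                        color[py][px][ch] += w * rad[ch]
    # Normalize accumulated color by the accumulated weight per pixel.
    for py in range(height):
        for px in range(width):
            if weight[py][px] > 1e-8:
                color[py][px] = [c / weight[py][px] for c in color[py][px]]
    return color


# Usage: one fully lit point facing the light, rendered into a 5x5 image.
rad = point_radiance(
    albedo=(0.5, 0.5, 0.5),
    normal=(0.0, 0.0, 1.0),
    spec_coeffs=[1.0],
    spec_basis=[(0.1, 0.1, 0.1)],
    visibility=1.0,
    w_i=(0.0, 0.0, 1.0),
    light_rgb=(1.0, 1.0, 1.0),
)
image = splat([(0.0, 0.0, 1.0)], [rad], focal=1.0, width=5, height=5)
```

Every operation in this sketch is a smooth function of the point position and the shading inputs wherever the weights are non-zero, which is what lets a forward, splatting-based renderer pass gradients back to the point attributes when written in an automatic-differentiation framework.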